Higher Education AI Search Strategy: What Students Expect vs. How Institutions Must Adapt

December 2nd, 2025

Key Insights

- Students have shifted how they search. Prospective learners now use AI tools alongside traditional search, making structured, consistent program information essential for visibility.

- Institutional readiness lags student behavior. Many colleges recognize the influence of AI search but still lack the systems and processes to monitor and improve their presence in generative results.

- An AI search strategy requires core operational alignment. Institutions must unify program data, structure pages for AI readability, reinforce entity signals, and maintain ongoing data hygiene to stay competitive.

Half of prospective students use AI tools weekly. Nearly 80% read Google’s AI Overviews before clicking a single search result.

For most higher education institutions, that means students are forming opinions about your programs before they ever reach your website. If your information isn’t structured for AI retrieval, you’re invisible during the moment that matters most.

The UPCEA x Search Influence AI Search in Higher Education Research Study tracked how students search for programs in 2025. A parallel Snap Poll of 30 UPCEA members measured institutional readiness. Together, they reveal a sector-wide gap: students have moved to AI-assisted search faster than colleges have adapted their content strategies.

This guide breaks down how students search today and outlines the four operational components institutions need to compete for visibility in AI search.

The New Student Discovery Model and Its Impact on AI Search Visibility

Higher ed has spent decades optimizing solely for traditional search engines. The challenge now is optimizing for how students actually gather information. That behavior looks much different today than it did even two years ago.

Students want direct answers, quick comparisons, and credible signals, and they toggle between tools to get them. Generative AI fits naturally into that pattern because it delivers instant interpretation without requiring students to click through multiple pages or sift through fluffy marketing copy.

AI tools have become routine

What once felt like experimental search is now embedded in the routine research process.

- 50% of prospective students use AI tools weekly

- 79% read AI Overviews before clicking a single blue link

AI compresses the “orientation” phase of search, the stage where students try to understand what a program involves and which institutions align with their goals. Historically, that moment happened on your website. Now it happens in a summary box before a student decides whether your program is worth investigating further.

If that summary box is inconsistent or simply missing your institution entirely, you’ve lost visibility at a moment that shapes early impressions.

Students layer Google, YouTube, & AI together

Even with all the AI buzz, students aren’t abandoning traditional search engines in their search for professional and continuing education (PCE) programs. They’re simply supplementing them.

- 84% still use traditional search for core information

- 61% use YouTube to explore programs visually

- 50% use AI tools for context and comparison

This creates a layered research journey where each channel serves a specific function:

- AI platforms provide the first pass of understanding — condensing program details and requirements, and fitting them into digestible summaries

- Google (and other search engines) expand the options — surfacing alternative programs, comparison articles, and third-party reviews

- YouTube shapes expectations and emotional resonance — showing campus life, faculty interviews, and student testimonials

- Institution websites verify credibility — confirming details, checking accreditation, and exploring outcomes data

Consistency in presence and messaging across each channel is the new baseline for visibility.

Universities still hold trust, but AI sets the stage

Despite the rise of new tools, institutions remain the source students trust most. 77% rely on university websites to verify program information.

But in many cases, students don’t start with you. They end with you.

A typical sequence now looks like this:

- AI-generated overview or citation → Initial understanding and shortlist formation

- Verification on .edu pages → Credibility check and detail confirmation

- Shortlist decisions → Final comparison and enrollment consideration

AI shapes the expectation. Your website proves (or disproves) the details. Accuracy and clarity must exist in both places for a student to move forward. (Example: If the AI search results say your MBA is 18 months and your program page says 24 months, the friction kills momentum.)

This reversed discovery model has profound implications for content strategy. You can no longer afford to treat your website as the sole first impression. It’s now the verification step. And if AI has already set the wrong expectation, your website becomes a correction tool instead of a conversion tool.

Source: UPCEA x Search Influence — AI Search in Higher Education: How Prospects Search in 2025

Institutional Readiness: Where Colleges Stand in 2025

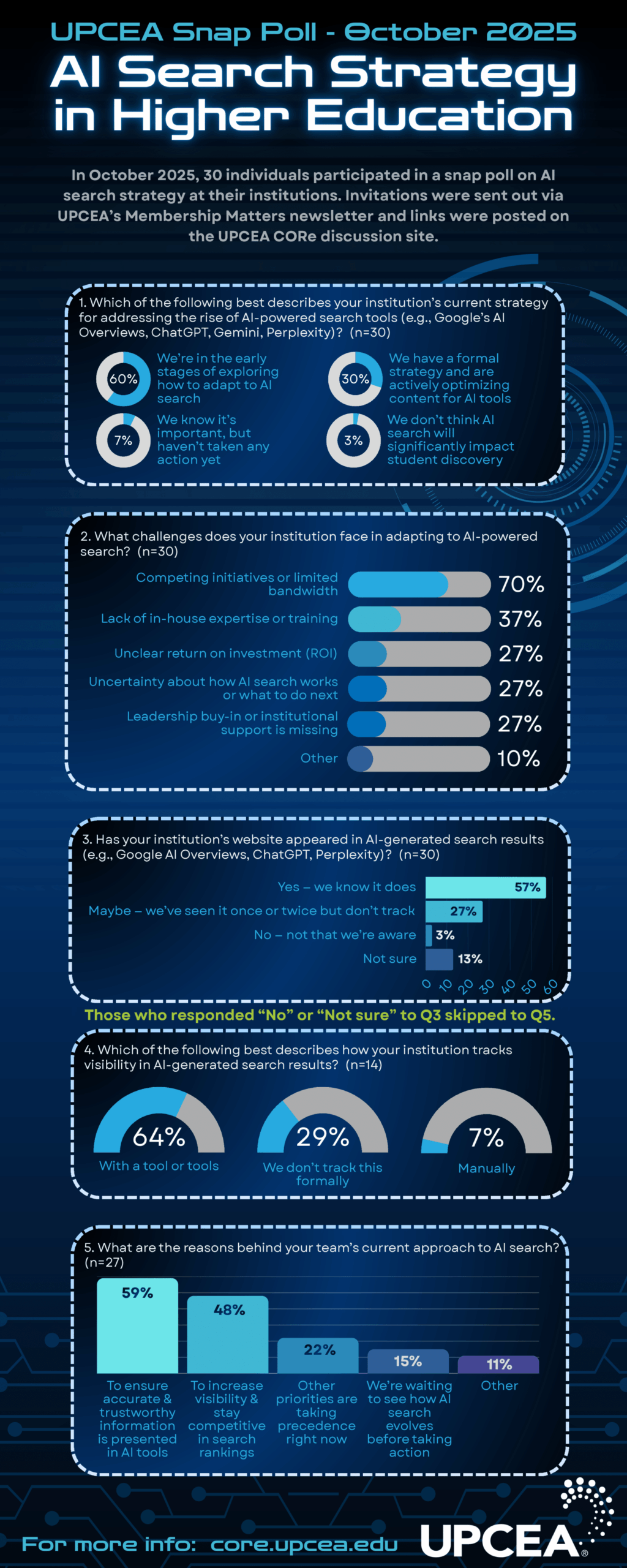

If student search trends are moving quickly, institutional readiness is moving much more slowly. Conducted with 30 UPCEA members, the UPCEA x Search Influence Snap Poll reveals a sector that understands the importance of AI search but lacks the operational capacity to support it.

Most institutions know AI matters. The question is whether they have the bandwidth, expertise, and internal alignment to respond.

Awareness is high, execution is thin

The majority of institutions recognize that AI is reshaping the discovery process. But knowing and acting are two very different things.

- 60% are in the early stages of “exploring” AI search

- 30% have a formal AI search strategy

- 10% haven’t started or don’t believe AI will impact program discovery

“Exploring” signals curiosity, but not implementation. A formal strategy requires solid infrastructure (ownership, processes, consistency), and that’s where many institutions are falling behind.

Without clear ownership, AI search becomes everyone’s concern and no one’s responsibility. Marketing assumes IT will handle technical implementation. IT assumes marketing will define content standards. Enrollment assumes someone else is tracking whether programs appear accurately in AI summaries. Meanwhile, competitor institutions with defined workflows are reinforcing their visibility signals daily.

The barriers are structural, not philosophical

No one is debating whether AI-driven search matters. The bottlenecks are operational:

- 70% cite limited bandwidth or competing priorities

- 36.67% cite lack of in-house expertise or training

- 26.67% cite unclear ROI or uncertainty about AI mechanics

AI systems evolve monthly. Waiting six months to decide “what to do” means falling behind institutions that have already begun reinforcing their information. Every month your program data remains inconsistent is another month AI models learn to trust competitor sources instead of yours.

Visibility tracking is still inconsistent

When asked whether their institution appears in AI-generated answers:

- 56.7% said “yes”

- 26.7% said “maybe”

- 13.3% said “uncertain”

Only 64.29% of those tracking use structured methods, like formal AI SEO tracking tools.

If institutions don’t know when or how they’re appearing, they also can’t know:

- Whether AI summaries are accurate

- Whether competitors appear more frequently

- Whether certain programs are misrepresented

Guessing is not a visibility strategy. Without structured monitoring, institutions can’t identify which programs underperform in AI search, can’t track whether content updates improve citation frequency, and can’t benchmark their visibility against regional competitors.

Early movers are motivated by accuracy & competition

Among the institutions already adopting an AI search strategy:

- 59.26% want to ensure the accuracy of AI-generated information

- 48.15% are focused on visibility and competitive positioning

Meanwhile:

- 22.22% say other priorities rank higher

- 14.81% are “waiting to see what happens”

The difference between these groups is trajectory. Early adopters move ahead while others accumulate visibility debt, the long-term disadvantage that forms when AI systems learn from competitors instead of from you.

Institutions waiting for clearer ROI data or more mature tracking tools are making a strategic bet that the cost of delay is lower than the cost of early action. For some, that bet may prove correct. For most, it won’t.

Source: AI Search Strategy in Higher Education — Snap Poll, October 2025

Core Components of a Modern Higher Education AI Search Strategy

Generative search engines don’t reward creativity. They reward clarity. Winning page one and the AI Overview depends on whether a program’s information is consistent, structured, and reinforced everywhere a student or AI tool might encounter it.

1. Establish program data consistency as the institutional source of truth

AI models may “misstep” when foundational information is inconsistent. If your academic catalog lists a different cost than the program page, or if PDFs still reference old admissions cycles, AI defaults to whichever source appears most stable, and that isn’t always your institution.

Your information cleanup has to start with the facts:

- Cost — tuition, fees, financial aid opportunities, and total program investment

- Duration — credit hours, typical completion time, and pace options

- Modality — online, hybrid, in-person, or flexible formats

- Requirements — prerequisites, application materials, and admission standards

- Outcomes — employment rates, salary data, and career paths

When these details are aligned across catalogs, PDFs, program pages, and third-party listings, AI no longer has to choose between conflicting answers. Consistent facts increase trust, and trust improves visibility.

Program pages can’t be accurate until the underlying data is

Data consistency isn’t a content problem. It’s a governance problem. Most institutions store program information in multiple systems: student information systems, content management systems, PDF repositories, third-party directories, and marketing automation platforms. Each system may reflect different update cycles, approval processes, and data owners.

The solution isn’t consolidating all systems into one platform. It’s establishing a single source of truth for core program attributes and building workflows that propagate updates across all systems simultaneously. When tuition changes, that change should flow to every digital property within hours, not weeks.
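A source-of-truth workflow can be sketched as a simple drift check: compare what each system currently publishes against one canonical record. The snippet below is a minimal illustration, not a description of any particular platform. The attribute names, source names, and values are all hypothetical.

```python
# Hypothetical canonical record for one program (the "source of truth").
CANONICAL = {"duration_months": 24, "modality": "hybrid", "tuition_usd": 42000}

# Hypothetical snapshots of what each downstream system currently publishes.
PUBLISHED = {
    "program_page": {"duration_months": 24, "modality": "hybrid", "tuition_usd": 42000},
    "catalog": {"duration_months": 24, "modality": "hybrid", "tuition_usd": 42000},
    "brochure_pdf": {"duration_months": 18, "modality": "hybrid", "tuition_usd": 39500},
}


def find_drift(canonical, published):
    """Return (source, attribute, expected, actual) for every mismatch."""
    drift = []
    for source, facts in published.items():
        for attribute, expected in canonical.items():
            actual = facts.get(attribute)  # None if the source omits the fact
            if actual != expected:
                drift.append((source, attribute, expected, actual))
    return drift


drift = find_drift(CANONICAL, PUBLISHED)
for source, attribute, expected, actual in drift:
    print(f"{source}: {attribute} is {actual!r}, expected {expected!r}")
```

In this sketch the outdated brochure is flagged for both duration and tuition, which is the kind of conflict (18 months in one place, 24 in another) that creates friction for students and AI tools alike.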

2. Build an AI-readable content architecture on program pages

Even perfectly aligned information can underperform if the page structure makes it hard for AI to interpret. Generative tools scan for clarity, hierarchy, and explicit answers to common user queries.

Pages are strongest when the essentials sit in predictable, machine-readable patterns:

- Clean headings that signal structure and topic boundaries

- Modular sections focused on single topics without mixing concerns

- Concise explanations that answer specific questions directly

- Scannable details formatted for quick extraction (tables, lists, definition blocks)

Students prefer this structure, too. Clear sections for cost, schedule, outcomes, and requirements shorten the time between interest and understanding. AI tools favor the same format because predictable, labeled sections reduce ambiguity about which fact answers which question.

When program pages feel like reference material rather than brochure copy, visibility improves

AI-readable architecture doesn’t mean stripping personality from your content. It means organizing information so both humans and machines can extract what they need quickly. You can still include testimonials, brand messaging, and storytelling, but those elements should supplement structured information, not replace it.

Consider how AI extracts content. It doesn’t read your page top to bottom like a human. It scans for semantic patterns, identifies chunks that answer specific queries, and evaluates whether those chunks are self-contained and coherent. A program page that buries cost information in the middle of a narrative paragraph underperforms compared to one that lists cost in a clearly labeled section with supporting context.
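To illustrate why labeled sections are easier to extract, here is a rough sketch that splits a page into chunks keyed by heading. It is only an illustration and makes no claim about how any specific AI system retrieves content. The `SectionSplitter` class and the sample page are hypothetical, and only Python's standard `html.parser` module is used.

```python
from html.parser import HTMLParser


class SectionSplitter(HTMLParser):
    """Group visible text under the most recent <h2> heading."""

    def __init__(self):
        super().__init__()
        self.sections = {}        # heading text -> list of text fragments
        self._current = None      # heading currently being filled
        self._in_heading = False

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_heading = True
            self._current = ""

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_heading = False
            self._current = self._current.strip()
            self.sections[self._current] = []

    def handle_data(self, data):
        text = data.strip()
        if not text:
            return
        if self._in_heading:
            self._current += text
        elif self._current is not None:
            self.sections[self._current].append(text)


# Hypothetical program page with one clearly labeled section per fact.
PAGE = """
<h2>Cost</h2><p>Tuition is $42,000 for the full program.</p>
<h2>Duration</h2><p>24 months, part-time.</p>
<h2>Why choose us</h2><p>Our community is like no other.</p>
"""

splitter = SectionSplitter()
splitter.feed(PAGE)
sections = {heading: " ".join(parts) for heading, parts in splitter.sections.items()}
print(sections["Cost"])
```

With cost in its own labeled section, the answer to "how much does it cost?" is a single self-contained chunk. If the same figure sat inside the narrative section, an extractor would have to separate it from the surrounding marketing copy.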

3. Strengthen program entities through cross-site signals

AI search isn’t keyword-based. It’s entity-based.

Models build their understanding of a program by connecting signals across your entire ecosystem. If terminology shifts from page to page, if key details appear in one place but not another, or if older content contradicts newer versions, entity confidence drops.

Cross-site reinforcement matters:

- Consistent terminology across all digital properties

- Internal linking that clarifies relationships between programs, departments, and requirements

- Schema markup that defines program attributes in a machine-readable format

- Repetition of essential facts across relevant pages to reinforce entity stability

The more stable and coherent the entity, the more likely AI is to cite it.

This is how institutions move from “we sometimes appear” to “AI consistently references us”

When a student asks an AI tool, “What are the prerequisites for the MBA program at [your institution]?”, the AI doesn’t just check your MBA page. It checks every page where those prerequisites might be mentioned: department pages, catalog entries, PDF documents, and FAQ sections. If those sources conflict, AI either omits your institution or presents the information with lower confidence.

Schema markup amplifies entity strength by explicitly defining relationships. When you mark up your MBA program with structured data that identifies its parent department, associated faculty, duration, and cost, you help AI understand not just what the program is but how it fits into your institutional structure.
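As a hedged example of what such markup might look like, the sketch below builds a JSON-LD block in Python using the schema.org `EducationalOccupationalProgram` type. The institution name, department, and all values are placeholders, and the choice of type and properties is one reasonable option rather than a required pattern. Faculty relationships are left out to keep the example short.

```python
import json

# All names and values below are hypothetical placeholders.
program = {
    "@context": "https://schema.org",
    "@type": "EducationalOccupationalProgram",
    "name": "Master of Business Administration",
    "provider": {
        "@type": "CollegeOrUniversity",
        "name": "Example University",
        "department": {"@type": "Organization", "name": "School of Business"},
    },
    "timeToComplete": "P24M",  # ISO 8601 duration: 24 months
    "educationalProgramMode": "hybrid",
    "programPrerequisites": "Bachelor's degree from an accredited institution",
    "offers": {
        "@type": "Offer",
        "category": "Tuition",
        "price": "42000",
        "priceCurrency": "USD",
    },
}

json_ld = json.dumps(program, indent=2)
# JSON-LD is typically embedded in the page inside a script tag of this type.
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Generating the markup from the same record that feeds the program page keeps the structured data and the visible copy from drifting apart.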

4. Implement ongoing AI visibility monitoring & data hygiene

AI visibility is not a one-time project. Models update frequently, and each update reshapes which programs they surface, how they phrase details, and which institutions they trust.

Monitoring needs to be ongoing and structured around:

- Citation frequency — how often your programs appear in AI responses

- Accuracy of summaries — whether AI-generated descriptions match your current information

- Sentiment and positioning — how your programs are characterized relative to competitors

- Competitor visibility — which institutions are appearing when yours aren’t

This level of tracking enables institutions to identify patterns early, correct inaccuracies quickly, and establish authority over time.
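Two of these measures, citation frequency and summary accuracy, can be computed from a log of sampled AI answers. The sketch below assumes such a log already exists. The `SampledAnswer` structure, the institution names, and the sample data are all hypothetical, and duration stands in for whichever fact you choose to verify.

```python
from dataclasses import dataclass
from typing import List, Optional

OUR_NAME = "Example University"   # hypothetical institution
CANONICAL_DURATION_MONTHS = 24    # hypothetical source-of-truth value


@dataclass
class SampledAnswer:
    prompt: str
    cited: List[str]                        # institutions named in the answer
    stated_duration_months: Optional[int]   # None if the answer gave no duration


# Hypothetical log of AI answers collected for a set of tracked prompts.
SAMPLES = [
    SampledAnswer("best hybrid MBA programs", ["Example University", "Rival College"], 24),
    SampledAnswer("part-time MBA near me", ["Rival College"], None),
    SampledAnswer("how long is the Example University MBA", ["Example University"], 18),
    SampledAnswer("affordable MBA programs", ["Rival College", "Other State"], None),
]


def citation_frequency(samples, name):
    """Share of sampled answers that mention the institution."""
    if not samples:
        return 0.0
    return sum(name in s.cited for s in samples) / len(samples)


def summary_accuracy(samples, name, expected_months):
    """Among answers citing `name` with a stated duration, share that match."""
    checked = [
        s for s in samples
        if name in s.cited and s.stated_duration_months is not None
    ]
    if not checked:
        return None
    return sum(s.stated_duration_months == expected_months for s in checked) / len(checked)


print("Our citation frequency:", citation_frequency(SAMPLES, OUR_NAME))
print("Competitor citation frequency:", citation_frequency(SAMPLES, "Rival College"))
print("Our summary accuracy:", summary_accuracy(SAMPLES, OUR_NAME, CANONICAL_DURATION_MONTHS))
```

Running the same prompts on a fixed schedule makes the numbers comparable over time, so a content update can be checked against a later change in frequency or accuracy.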

About Search Influence’s support with AI visibility tracking

Unsure where to start? Our team provides structured AI traffic reporting that shows how your programs appear across generative platforms and where inconsistencies may be affecting trust. As your tracking partner, we can help your institution gain clear visibility into patterns, changes, and gaps, helping you prioritize the data and content updates that strengthen your position in AI search engines.


FAQs About Higher Education AI Search Strategy

How does AI decide which programs to cite?

AI looks for information that is consistent, structured, and verifiable across multiple pages and sources. Programs with aligned facts and clear architecture are more likely to appear.

How can leadership be convinced to prioritize AI search visibility?

Show the connection between visibility and enrollment. Students are forming opinions before they reach institutional websites, and institutions that don’t appear in AI-generated search results lose those early moments of influence.

How often should institutions audit program pages for AI readiness?

At least quarterly. AI models update rapidly, and regular audits keep key information current, content structure clean, and external listings aligned.

What KPIs indicate improvement in AI discoverability or trust?

Citation frequency, accuracy, sentiment, and competitor comparisons. These KPIs signal whether AI considers your institution a stable source of truth.

Students Have Shifted Their Discovery Habits. Your AI Search Strategy Must Catch Up.

AI isn’t a future threat. It’s an active influence in how prospective students search for programs and decide which institutions to trust.

Your student search guide

Based on survey data from 760 prospective adult learners, the UPCEA x Search Influence AI Search in Higher Education Study offers the most comprehensive view of how prospects utilize AI for program research.

Use the data to:

- Align leadership around AI search priorities

- Identify priority programs for content optimization

- Plan FY26 content investments with confidence

- Benchmark readiness against peer institutions

- Strengthen your visibility signals across search engines and AI tools

AI search is already shaping enrollment. Institutions that build their strategy now will lead the next phase of visibility.

Download the full study to get the complete data set and a clearer view of how to modernize your AI search strategy.